- Use cases

- In-cabin: Eye gaze on road, hands on wheel, drowsy driver.

- Smart-office: Attention analysis, room interaction, engagement analysis.

- Fitness applications: Avatar creation, hand tracking recognition, egocentric recognition.

- Security: Falling people, package delivery, person detection.

- Cosmetics: Facial recognition, head pose estimation, eye gaze estimation.

- Facial applications: Facial recognition, virtual try-on, IoT and more.

The exact data your model needs

Empowering engineers to generate cutting-edge, human-centric synthetic data for computer vision

Granular control with API

Perfect ground truth

Privacy compliant

Better models, faster time to production

Customized to your industry

In-cabin automotive

Our data empowers the automotive industry to efficiently and effectively develop models for in-cabin scenarios. Cover edge case scenarios and eliminate the need for costly data collection methods.

Security

With accurate representations of home and building environments, our data includes advanced motion and human-object interactions for the development of security systems.

Smart office

Our data enables engineers to understand human behavior in work environments, whether at home or in the office. The next generation of smart conferencing is powered by varied and realistic synthetic data.

Fitness

Generate accurate representations of people exercising at home and in the gym for robust health and fitness models. Our data covers a large variety of scenarios, including edge cases with broad variance.

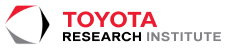

Cosmetics

Our photorealistic faces empower the cosmetics industry with over 50k high-quality, unique identities for testing and training models for a variety of applications. Our data is privacy compliant and comes with granular control over many parameters.

Facial applications

Our synthetic data accelerates your time to production for many consumer applications like virtual try-on, IoT, face recognition and more. Our data is flexible and can be customized for your exact data needs.

We support your vision use case

- Facial recognition
- Face and hair segmentation
- Eye gaze estimation
- Action recognition
- Full body segmentation
- Object detection
- Full body pose estimation
- Head pose estimation

The power of our synthetic data

- Less real data required: >90%
- Improvement in model accuracy: >10%
- Time to production: 2x

Get started with Datagen

Our data tailored for your industry

Choose your computer vision task

Face and hair segmentation: Design the exact dataset you need for your computer vision task.

Our data

Full body humans in context: Generate fully annotated, pixel-perfect full body humans and realistic scenes of humans interacting with objects in various environments.

Data generation and management

API: Our API enables you to request the exact dataset you need for your use case by giving you full control over individual parameters through code.

Platform: Our platform enables you to manage, preview, and share your data within your team.